Posted on: Mar 3, 2005

A full-scale quantum computer could produce reliable results even if its components performed no better than today’s best first-generation prototypes, according to a paper in the March 3 issue of the journal Nature* by a scientist at the Commerce Department's National Institute of Standards and Technology (NIST).

In theory, such a quantum computer could be used to break commonly used encryption codes, to improve optimization of complex systems such as airline schedules, and to simulate other complex quantum systems.

A key issue for the reliability of future quantum computers—which would rely on the unusual properties of nature’s smallest particles to store and process data—is the fragility of quantum states. Today’s computers use millions of transistors that are switched on or off to reliably represent values of 1 or 0. Quantum computers would use atoms, for example, as quantum bits (qubits), whose magnetic and other properties would be manipulated to represent 1 or 0 or even both at the same time. These states are so delicate that qubit values would be unusually susceptible to errors caused by the slightest electronic 'noise.'

To get around this problem, NIST scientist Emanuel Knill suggests using a pyramid-style hierarchy of qubits made of smaller and simpler building blocks than envisioned previously, and teleportation of data at key intervals to continuously double-check the accuracy of qubit values. Teleportation was demonstrated last year by NIST physicists, who transferred key properties of one atom to another atom without using a physical link.

'There has been a tremendous gap between theory and experiment in quantum computing,' Knill says. 'It is as if we were designing today's supercomputers in the era of vacuum tube computing, before the invention of transistors. This work reduces the gap, showing that building quantum computers may be easier than we thought. However, it will still take a lot of work to build a useful quantum computer.'

Use of Knill's architecture could lead to reliable computing even if individual logic operations made errors as often as 3 percent of the time—performance levels already achieved in NIST laboratories with qubits based on ions (charged atoms). The proposed architecture could tolerate several hundred times more errors than scientists had generally thought acceptable.

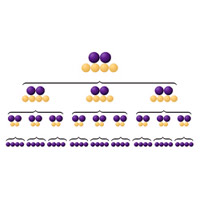

Knill’s findings are based on several months of calculations and simulations on large, conventional computer workstations. The new architecture, which has yet to be validated by mathematical proofs or tested in the laboratory, relies on a series of simple procedures for repeatedly checking the accuracy of blocks of qubits. This process creates a hierarchy of qubits at various levels of validation.

For instance, to achieve relatively low error probabilities in moderately long computations, 36 qubits would be processed in three levels to arrive at one corrected pair. Only the top-tier, or most accurate, qubits are actually used for computations. The more levels there are, the more reliable the computation will be.
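The 36-qubit figure can be reproduced with a small counting sketch. The article does not say how many qubits sit at each level, so the numbers here are assumptions chosen to match it: a lowest-level block of 4 qubits carrying one pair, and each higher level combining 3 blocks from the level below. The function name `qubits_per_level` and its parameters are hypothetical.

```python
def qubits_per_level(levels, base_block=4, branching=3):
    """Count the physical qubits behind one encoded pair at each level.

    base_block and branching are illustrative assumptions, not values
    stated in the article: 4 qubits per lowest-level block, and 3 blocks
    merged at every higher level.
    """
    counts = []
    size = base_block
    for _ in range(levels):
        counts.append(size)
        size *= branching
    return counts


# Three levels under these assumptions: 4, then 12, then 36 qubits per pair.
print(qubits_per_level(3))
```

Under these assumptions each extra level triples the hardware behind a single usable pair, which is one way to see why deeper hierarchies buy reliability at a steep resource cost.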

Knill’s methods for detecting and correcting errors rely heavily on teleportation. Teleportation enables scientists to measure how errors have affected a qubit’s value while transferring the stored information to other qubits not yet perturbed by errors. The original qubit’s quantum properties would be teleported to another qubit as the original qubit is measured.
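To make the teleportation step concrete, here is a minimal sketch of the standard textbook protocol for a single qubit, simulated with plain Python complex numbers. It is not Knill's encoded, error-detecting version; it only shows that measuring the original qubit (together with one half of an entangled pair) moves its state onto a fresh qubit. All function names are hypothetical.

```python
import math

S = 1 / math.sqrt(2)
H = [[S, S], [S, -S]]  # Hadamard gate


def apply_1q(state, gate, q, n=3):
    """Apply a 2x2 gate to qubit q (qubit 0 is the most significant bit)."""
    new = [0j] * len(state)
    shift = n - 1 - q
    for i, amp in enumerate(state):
        bit = (i >> shift) & 1
        for out in (0, 1):
            j = (i & ~(1 << shift)) | (out << shift)
            new[j] += gate[out][bit] * amp
    return new


def apply_cnot(state, control, target, n=3):
    """Flip the target qubit wherever the control qubit is 1."""
    new = [0j] * len(state)
    cs, ts = n - 1 - control, n - 1 - target
    for i, amp in enumerate(state):
        j = i ^ (1 << ts) if (i >> cs) & 1 else i
        new[j] += amp
    return new


def teleport(a, b):
    """Teleport a|0> + b|1> from qubit 0 to qubit 2.

    Returns the final state of qubit 2 for each of the four possible
    measurement outcomes (m0, m1) on qubits 0 and 1.
    """
    state = [0j] * 8
    state[0b000], state[0b100] = a, b      # qubit 0 holds the data
    state = apply_1q(state, H, 1)          # entangle qubits 1 and 2
    state = apply_cnot(state, 1, 2)
    state = apply_cnot(state, 0, 1)        # couple the data qubit to the pair
    state = apply_1q(state, H, 0)
    results = {}
    for m0 in (0, 1):
        for m1 in (0, 1):
            base = (m0 << 2) | (m1 << 1)   # project onto outcome (m0, m1)
            branch = [state[base], state[base | 1]]
            norm = math.sqrt(sum(abs(x) ** 2 for x in branch))
            branch = [x / norm for x in branch]
            if m1:                         # bit-flip correction (X)
                branch = [branch[1], branch[0]]
            if m0:                         # phase-flip correction (Z)
                branch = [branch[0], -branch[1]]
            results[(m0, m1)] = branch
    return results


for outcome, final in teleport(0.6, 0.8j).items():
    print(outcome, final)
```

Whatever the measurement outcome, the destination qubit ends up in the original state once the matching correction is applied. The measurement results themselves carry no information about `a` and `b`; in an error-checking scheme like the one described above, encoded measurements of this kind are what reveal whether errors have occurred.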

The new architecture allows trade-offs between error rates and computing resource demands. To tolerate 3 percent error rates in components, massive amounts of computing hardware and processing time would be needed, partly because of the “overhead” involved in correcting errors. Fewer resources would be needed if component error rates can be reduced further, Knill’s calculations show.

'There must be no barriers for freedom of inquiry. There is no place for dogma in science. The scientist is free, and must be free to ask any question, to doubt any assertion, to seek for any evidence, to correct any errors.'
